Posted by James Forshaw, Project Zero

This blog is a continuation of my series of Windows exploitation tricks. This one describes an exploitation trick I’ve been trying to develop for years, succeeding (mostly, more on that later) on the latest versions of Windows 10. It’s a trick to trap access to virtual memory, get feedback when it occurs and delay access indefinitely. The blog will go into some of the background for why this technique is useful, an overview of the research I did to find the trick as well as an overview of the types of vulnerabilities it can be used with.

Background

When would you need such an exploitation trick? A good example of the types of security vulnerabilities which can benefit can be found in the seminal Bochspwn research by Mateusz Jurczyk and Gynvael Coldwind. The research showed a way of automating the discovery of memory double-fetches in the Windows kernel.

If you’ve not read the paper, a double-fetch is a type of Time-of-Check Time-of-Use (TOCTOU) vulnerability where code reads a value from memory, such as a buffer length, verifies that value is within bounds and then rereads the value from memory before use. By swapping the value in memory between the first and second fetches the verification is bypassed which can lead to security issues such as privilege escalation or information disclosure. The following is a simple example of a double fetch taken from the original paper.

    DWORD* lpInputPtr = // controlled user-mode address
    UCHAR  LocalBuffer[256];

    if (*lpInputPtr > sizeof(LocalBuffer)) { // ①
        return STATUS_INVALID_PARAMETER;
    }
    RtlCopyMemory(LocalBuffer, lpInputPtr, *lpInputPtr); // ②

This code copies a buffer from a controlled user mode address into a fixed sized stack buffer. The buffer starts with a DWORD size value which indicates the total size of the buffer. Memory corruption can occur if the size value pointed to by lpInputPtr changes between the first read of the size value to compare against the buffer size ① and the second read of the size when copying into the buffer ②. For example, if the first time the value is read it’s 100 and the second it’s 400 then the code will pass the size check as 100 is less than 256 but will then copy 400 bytes into that buffer, corrupting the stack.
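To make the 100 versus 400 example concrete, here is a minimal Python sketch of the same check-then-use pattern. It is only a toy model: `FlippingMemory` and `vulnerable_copy` are hypothetical names invented for illustration, and the "memory" simply hands back a different value on the second read rather than being changed by a racing thread.

```python
# Toy model of the double fetch: the "kernel" reads the size twice from
# memory the attacker controls. FlippingMemory is a hypothetical stand-in
# for a user-mode DWORD whose value changes between the two reads.
LOCAL_BUFFER_SIZE = 256


class FlippingMemory:
    """Returns each value in `values` in turn, then repeats the last one."""

    def __init__(self, values):
        self._values = list(values)
        self._reads = 0

    def read(self):
        index = min(self._reads, len(self._values) - 1)
        self._reads += 1
        return self._values[index]


def vulnerable_copy(size_ptr):
    """Mirrors the C snippet: check (first fetch), then copy (second fetch)."""
    if size_ptr.read() > LOCAL_BUFFER_SIZE:       # first fetch, the check
        return "STATUS_INVALID_PARAMETER", 0
    copied = size_ptr.read()                      # second fetch, the use
    overflow = max(0, copied - LOCAL_BUFFER_SIZE)  # bytes written past the buffer
    return "COPIED", overflow


status_stable, overflow_stable = vulnerable_copy(FlippingMemory([100]))
status_raced, overflow_raced = vulnerable_copy(FlippingMemory([100, 400]))
print(status_stable, overflow_stable)
print(status_raced, overflow_raced)
```

With a stable value nothing is written out of bounds. With the value flipped between the fetches, the check passes on 100 and the copy uses 400, which overruns the 256 byte buffer by 144 bytes.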

Once a vulnerability such as this example was discovered Mateusz and Gynvael needed to exploit it. How they achieved exploitation is detailed in section 4 of the paper. The exploit techniques that were identified were all probabilistic. Exploitation typically required two threads racing each other, with one reading and one writing. The probabilistic nature of success is due to the probability that in between the first read from a memory location and the second read the writing thread sets a new value which exploits the vulnerability.

To widen the TOCTOU window many of the techniques described abuse the behavior of virtual memory on Windows. A process on Windows can typically access a large virtual memory region up to 8TiB in size. This size is likely to be significantly larger than the physical memory in the system, especially considering the limit is per-process, not per-system. Therefore to maintain the illusion of such a large memory address space the kernel uses on-demand memory paging.

When memory is allocated in the process the CPU’s page tables are set up to indicate the presence of the memory region but are marked as invalid. At this point the virtual memory region has been allocated but there is no physical memory backing it. When the process tries to access that memory region the CPU will generate an exception, generally referred to as a page-fault, which is handled by the kernel.

The kernel can look up the memory address which was accessed to cause the page-fault and try and fix the address. How the page-fault is fixed depends on the type of memory access. A simple example is if the memory was allocated but not yet used the kernel will get a physical memory page, initialize it to zeros then adjust the page tables to map that new physical memory page at the faulting address. Once the page-fault has been fixed the faulting thread can be restarted at the instruction which accessed the memory and the memory access should now succeed as if it was always present.
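The demand-zero case can be sketched as a small Python model. This is an illustration of the idea only, not how the Windows memory manager is implemented; `ToyAddressSpace` and its fields are hypothetical names.

```python
PAGE_SIZE = 4096


class ToyAddressSpace:
    """Hypothetical model: pages are reserved first and backed on first access."""

    def __init__(self):
        self.reserved = set()    # page numbers allocated but not yet backed
        self.page_table = {}     # page number -> bytearray acting as a physical page
        self.fault_count = 0

    def allocate(self, base, size):
        first = base // PAGE_SIZE
        last = (base + size - 1) // PAGE_SIZE
        self.reserved.update(range(first, last + 1))

    def _handle_fault(self, page):
        if page not in self.reserved:
            raise MemoryError("access violation")
        self.fault_count += 1
        self.page_table[page] = bytearray(PAGE_SIZE)  # zero-initialized page

    def read_byte(self, address):
        page, offset = divmod(address, PAGE_SIZE)
        if page not in self.page_table:
            self._handle_fault(page)   # fix up the fault, then retry the access
        return self.page_table[page][offset]


space = ToyAddressSpace()
space.allocate(0x10000, 3 * PAGE_SIZE)
first = space.read_byte(0x10010)    # faults, page is zero filled
second = space.read_byte(0x10020)   # same page, already resident
print(first, second, space.fault_count)
```

Only the first access to a page takes the slow path through the fault handler. Later accesses to the same page complete as if the memory had always been present.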

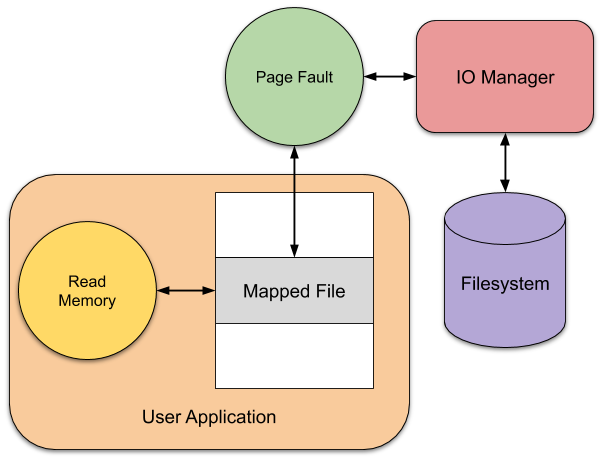

A more complex scenario is if the page is part of a memory mapped file. In this case the kernel will need to request that the page’s data is read back from disk before it can satisfy the page-fault. This can take quite a long time, at least for spinning rust disks, so it might require the faulting thread to be suspended while it waits for the page to be read. Once the page has been read the memory can be fixed up, the original thread can be resumed and the thread restarted at the faulting instruction.

The end result is it can take a significant amount of time, relative to a CPU’s native speed that is, to handle a page-fault. However, abusing these virtual memory behaviors only widens the TOCTOU window, it didn’t allow for precise timing to swap values in memory. The result is the exploitation techniques still came with limitations. For example, it was very slow if not impossible in some cases to exploit on a machine with a single CPU core as it relies on having concurrent threads reading and writing.

An ideal exploit primitive would be one where the exploitation window can be made arbitrarily large so that it becomes trivial to win the race. Taking previous experience and knowledge of existing bug classes my ideal primitive would be one which meets a set of criteria:

- Works on a default installation of Windows 10 20H2.

- Gives a clear signal when memory is read or written.

- Works when memory is accessed from both user and kernel mode.

- Allows for delaying memory access indefinitely.

- The data in the memory accessed is arbitrary.

- The primitive can be set up from a range of privilege levels.

- Can trap multiple times during the same exploit.

While meeting all these criteria would be ideal, there’s no guarantee we’ll meet all or any of them. If we only meet some then the range of exploitation vulnerabilities might be limited. Let’s start with a quick overview of the existing work which might give us an idea of how to proceed to find a primitive.

Existing Work

Having spoken to Mateusz and made an effort to look for any subsequent work there seems to be little novel work over and above the original Bochspwn paper on the exploitation of these types of TOCTOU issues. At least this is true for exploitation on Windows, however, novel techniques have been developed on other platforms, specifically Linux. Both of these techniques rely on the behavior of virtual memory I previously described.

The first technique in Linux makes use of Userfault File Descriptor (userfaultfd) to get notifications when page-faults occur in a process. With userfaultfd enabled a secondary thread in the process can read a notification and handle the page-fault in user mode. Handling the fault could be mapping memory at the appropriate location or changing page protection. The key is the faulting thread is suspended until the page-fault is handled by another thread. Therefore if a kernel function accessed the memory the request will be trapped until it's completed. This allows for a primitive where the memory access can be delayed indefinitely as well as having a timing signal for the access. Using userfaultfd also allows the fault to be distinguished between read and write faults as the memory page can be write-protected.
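The shape of that primitive (a signal when the access happens, and a faulting thread that stays parked until someone else resolves the fault) can be sketched with plain Python threads. This does not use userfaultfd itself; `TrappedPage` is a hypothetical class that only models the semantics.

```python
import threading


class TrappedPage:
    """Hypothetical model of a page whose first access blocks until resolved."""

    def __init__(self):
        self.faulted = threading.Event()     # signal: somebody touched the page
        self._resolved = threading.Event()   # set once the handler supplies data
        self._data = None

    def read(self):
        if not self._resolved.is_set():
            self.faulted.set()               # notify the handler
            self._resolved.wait()            # faulting thread is suspended here
        return self._data

    def resolve(self, data):
        self._data = data
        self._resolved.set()


events = []
page = TrappedPage()


def victim():
    events.append("victim: about to read")
    value = page.read()
    events.append(f"victim: read {value}")


thread = threading.Thread(target=victim)
thread.start()
page.faulted.wait()                          # handler learns the access happened
events.append("handler: fault observed, victim is parked")
page.resolve(0x41)                           # handler chooses when, and with what data
thread.join()
print("\n".join(events))
```

The handler decides both when the victim continues and what data it sees, which is exactly the property the ideal primitive needs.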

Using userfaultfd works for in-process access such as from the kernel, but is not really useful if the code accessing the memory is in another process. To solve that problem you can use the FUSE file system as Jann Horn demonstrated in a previous Project Zero blog post. A FUSE file system is implemented entirely in user mode, but any requests for the file go through the Linux kernel’s Virtual File System APIs. As a file is accessed as if it was implemented by an in-kernel file system it’s possible to map that file into memory using mmap. When a page-fault occurs on a FUSE backed memory region a request will be made to the user-mode file system daemon which can delay the read or write request indefinitely.

Remote File Systems

As far as I can tell there’s nothing equivalent to Linux’s userfaultfd on Windows. One feature which caught my eye was memory write watches. But those seem to just allow an application to query if memory has been written to since the last time it was checked and don’t allow memory writes to be trapped.

If we can’t just trap page-faults to virtual memory what about mapping a file on a user-mode filesystem like FUSE? Unfortunately there is no built-in FUSE driver in Windows 10 (yet?), but that doesn’t mean there’s no mechanism to implement a file system in user-mode. There are some efforts to make a real FUSE on Windows, such as the WinFsp project, but I’d expect the chances of them being installed on a real system to be vanishingly small.

The first thought I had was to try to exploit Multiple UNC Provider (MUP) clients. When you access a file via a UNC path, e.g. \\server\share\file.bin, this will be handled by a MUP driver in the kernel, which will pass it to one of the registered client drivers. As far as the kernel is concerned the opened file is a regular file (with some caveats) which generally means the file can be mapped into memory. However, any requests for the contents of that file will not be handled directly, but instead handled by a server over a network protocol.

Ideally we should be able to implement our own server, handle the read or write requests to a file mapping which will allow us to detect or delay the request so that we can exploit any TOCTOU. The following table contains only Microsoft MUP drivers that I identified. The table contains what versions of Windows 10 the driver is supported on and whether it’s something enabled by default.

| Remote File System | Supported Version | Default? |
| --- | --- | --- |
| SMB | Everything | Yes (SMBv1 might be disabled) |
| WebDAV | Everything | Yes (except Server SKUs) |
| NFS | Everything | No |
| P9 | Windows 10 1903 | No (needs WSL) |
| Remote Desktop Client | Everything | Yes |

While MUP was designed for remote file systems there’s no requirement that the file system server is actually remote. SMB, WebDAV and NFS are IP based protocols and can be redirected to localhost. P9 uses a local Unix Socket which can’t be remoted anyway. The terminal services client sends file access requests back to the client system over the RDP protocol. For all these protocols we can implement the server with varying degrees of effort and see if we can detect and delay reads and writes to the file mapping.

I decided to focus only on two, SMB and WebDAV. These were the only two which are enabled by default and are trivially usable. While the Remote Desktop Client is in theory installed by default the RDP server is not normally enabled by default. Also setting up the RDP session is complex and might require valid authentication credentials therefore I decided against it.

Server Message Block

SMB is almost as old as Windows itself, having been introduced in Lan Manager 1.0 back in 1987. The latest SMB version 3.1 protocol only bears a passing resemblance to that original version having shed its NetBIOS roots for a TCP/IP connection. Its lineage does mean it’s the best integrated of any of the network file systems, with the MUP APIs being designed around the needs of SMB.

I decided to do a simple test of the behavior of mapping a file over SMB. This is fairly easy as you can access SMB on the same machine via localhost. I first created a 1GiB file on a local disk, the rationale being if SMB supports caching file data it’s unlikely to read something that large in one go. I then started Wireshark and monitored the loopback interface to capture the SMB traffic as shown below.

I then wrote a quick PowerShell script which maps the file into memory and then reads a few bytes from memory at a few different offsets.

    Use-NtObject($f = Get-NtFile "\\localhost\c$\root\file.bin" -Win32Path) {
        Use-NtObject($s = New-NtSection -File $f -Protection ReadWrite) {
            Use-NtObject($m = Add-NtSection -Section $s -Protection ReadWrite) {
                $m.ReadBytes(0, 4)
                $m.ReadBytes(256*1024*1024, 4)
                $m.ReadBytes(512*1024*1024, 4)
                $m.ReadBytes(768*1024*1024, 4)
            }
        }
    }

This just reads 4 bytes from offsets 0, 256MiB, 512MiB and 768MiB. Going back to Wireshark I filtered the output to only SMBv2 read requests using the display filter smb2.cmd == 8, and the following four packets can be observed.

    Read Request Len:32768 Off:0 File: root\file.bin
    Read Request Len:32768 Off:268435456 File: root\file.bin
    Read Request Len:32768 Off:536870912 File: root\file.bin
    Read Request Len:32768 Off:805306368 File: root\file.bin

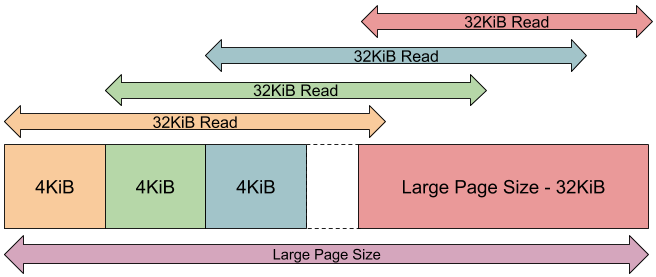

This corresponds with the exact memory offsets we accessed in the script although the length is always 32KiB in size, not the 4 bytes we requested. Note that it’s not the typical Windows memory allocation granularity of 64KiB which you might expect. In my testing I’ve never seen anything other than 32KiB requested.

All the bytes we’ve tested are aligned to the 32KiB block. What if the bytes were not aligned, for example if we accessed 4 bytes from address 512MiB minus 2? Changing the script to add the following allows us to check the behavior:

    $m.ReadBytes(512*1024*1024 - 2, 4)

In Wireshark we see the following read requests.

    Read Request Len:32768 Off:536838144 File: root\file.bin
    Read Request Len:32768 Off:536870912 File: root\file.bin

The accesses are still at 32KiB boundaries, however as the request straddles two blocks the kernel has fetched the preceding 32KiB of data from the file and then the following 32KiB. You might think that all makes sense, however this behavior turned out to be a fluke of testing.

The diagram above shows the structure of how mapped file reads are handled. When an address is read the kernel will request 32KiB from the closest 4KiB page boundary, not the 32KiB boundary. However, there’s then a secondary structure on top based on the supported size of large pages. If the read is anywhere within 32KiB of the end of a large page the read offset is always for the last 32KiB.

For example, on my system the large page size (as queried using the GetLargePageMinimum API) is 2MiB. Therefore if you start at offset 512MiB, for reads between 512MiB and 514MiB - 32KiB the kernel will read 32KiB from the offset truncated to the closest 4KiB boundary. Between 514MiB - 32KiB and 514MiB the read will always request offset 514MiB - 32KiB so that the 32KiB doesn’t cross the large page boundary.
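The rule described above can be written down as a small Python function. This is a sketch of the observed behavior only, assuming a 4KiB native page, a 32KiB read size and the 2MiB large page size from my system. `smb_read_offset` is a hypothetical helper, not anything the memory manager exposes.

```python
KIB = 1024
MIB = 1024 * KIB
NATIVE_PAGE = 4 * KIB
READ_SIZE = 32 * KIB
LARGE_PAGE = 2 * MIB   # assumed value returned by GetLargePageMinimum


def smb_read_offset(address_offset, large_page=LARGE_PAGE):
    """Predict the file offset of the read triggered by touching address_offset."""
    # Truncate to the native page containing the access.
    page_start = address_offset - (address_offset % NATIVE_PAGE)
    # The 32KiB read must not cross the end of the enclosing large page,
    # so it is clamped to the last 32KiB block of that large page.
    large_page_end = (address_offset // large_page + 1) * large_page
    last_block = large_page_end - READ_SIZE
    return min(page_start, last_block)


for offset in (0, 256 * MIB, 512 * MIB - 2, 512 * MIB, 512 * MIB + 5000):
    print(offset, "->", smb_read_offset(offset))
```

For the access at 512MiB minus 2 this predicts offset 536838144, matching the first request in the capture. The second request at 536870912 comes from the same 4 byte read touching the first page of the next large page.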

This allows reads at 4KiB boundaries, however the amount of data read is still 32KiB. This means that once one 4KiB page is accessed the kernel will populate the current page and 7 following pages. Is there any way to only populate a single native page? Based on a comment from Mateusz I tested returning short reads. If the SMB server returns fewer bytes than requested from the read then rather than failing it only populates the pages covered by the read. By returning these short reads we can get trap granularity down to the native page size except for the final 32KiB of a large page. If a read request is shorter than the native page size the rest of the page is zeroed.
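The effect of a short read can be modelled in a few lines of Python. This is a sketch under the assumption of a 4KiB native page and a 32KiB request. `populate_from_read` is a hypothetical helper that only mirrors the behavior described above.

```python
NATIVE_PAGE = 4 * 1024
READ_SIZE = 32 * 1024


def populate_from_read(data, requested=READ_SIZE):
    """Return the native pages populated by a (possibly short) read reply."""
    data = data[:requested]
    pages = []
    for start in range(0, len(data), NATIVE_PAGE):
        chunk = data[start:start + NATIVE_PAGE]
        # A partially covered page has its remainder zero filled.
        pages.append(chunk + bytes(NATIVE_PAGE - len(chunk)))
    return pages


full = populate_from_read(b"\xAA" * READ_SIZE)     # server returns everything
short = populate_from_read(b"\xAA" * NATIVE_PAGE)  # server returns one page
tiny = populate_from_read(b"\xAA" * 100)           # less than one page
print(len(full), len(short), len(tiny))
```

A full reply populates eight pages, whereas a reply of exactly one native page populates only the page that was touched. The following seven pages stay unresolved and can trap again later.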

What about writing? Let’s change the script again to call WriteBytes rather than ReadBytes, for example:

    $m.WriteBytes(256*1024*1024, @(0xAA, 0xBB, 0xCC, 0xDD))

You will see a write request to the file in Wireshark, similar to the following:

    Write Request Len:4096 Off:268435456 File: root\file.bin

However, if you dig a bit deeper you’ll notice that the write only happens once the file is closed, not in response to the WriteBytes call. This makes sense, there isn’t any easy way to detect when the write happened to force the page to be flushed back to the file system. Even if there was a way flushing to a network server for every write would have a massive performance impact.

All is not lost however. Before the memory is safe to write it must be populated with the contents from the file. Therefore if you look before the write you’ll see a corresponding read request for the 32KiB region which encompasses the write location, and that read is synchronous with the memory write. You can detect a write through its corresponding read but you can’t distinguish a read from a write at the protocol level.

All this testing indicates if we have control over the server we can detect memory access to the mapped file. Can we delay the access as well? I wrote a simple SMB server in .NET 5 using the SMBLibrary by Tal Aloni. I implemented the server with a custom filesystem handler and added some code to the read path which delays for 10 seconds when the file offset is 512MiB or greater.

    if (Position >= (512 * 1024 * 1024)) {
        Console.WriteLine("====> Delaying at Position {0:X}", Position);
        Thread.Sleep(10000);
        Console.WriteLine("====> Continuing.");
    }

The data returned by the read operation can be arbitrary, you just need to fill in the appropriate byte buffers in the read. To test the access times I wrapped the memory read requests inside a Measure-Command call to time the memory access.

    Measure-Command { $m.ReadBytes(512*1024*1024 - 4, 4) }
    Measure-Command { $m.ReadBytes(512*1024*1024 - 4, 4) }
    Measure-Command { $m.ReadBytes(512*1024*1024, 4) }
    Measure-Command { $m.ReadBytes(512*1024*1024, 4) }

To compare the access time a read request is made to a location 4 bytes below the 512MiB boundary and then at the 512MiB boundary. By making two requests we should be able to see if the results differ per-read. The results were as follows:

    # Below 512MiB (Request 1)
    Days              : 0
    Hours             : 0
    Minutes           : 0
    Seconds           : 1
    Milliseconds      : 25
    ...
    # Below 512MiB (Request 2)
    Days              : 0
    Hours             : 0
    Minutes           : 0
    Seconds           : 0
    Milliseconds      : 1
    ...
    # Above 512MiB (Request 1)
    Days              : 0
    Hours             : 0
    Minutes           : 0
    Seconds           : 10
    Milliseconds      : 358
    ...
    # Above 512MiB (Request 2)
    Days              : 0
    Hours             : 0
    Minutes           : 0
    Seconds           : 0
    Milliseconds      : 1
    ...

The first access below 512MiB takes around a second. This is because the request still needs to be made to the server and the server is written in .NET which can have a slow startup time for running new code. The second request takes significantly less than 1 second as the memory is now cached locally and so there doesn’t need to be any request.

For the accesses above 512MiB the first request takes around 10 seconds, which correlates with the added delay. The second request takes less than a second because the page is now cached locally. This is exactly what we’d expect, and proves that we can at least delay for 10 seconds. In fact you can delay the request at least 60 seconds before the connection is forcibly reset. This is based on the session timeout for the SMB client. You can query the SMB client timeout using the following command in PowerShell:

    PS> (Get-SmbClientConfiguration).SessionTimeout
    60
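The timing pattern from the test above (a slow first access past the threshold, then a fast cached second access) can be reproduced with a toy model in Python. This is a simulation only and does not speak SMB. `DelayingServer` and `CachedMapping` are hypothetical names, reads are simplified to aligned 32KiB blocks, and the delay is shortened to 0.2 seconds in place of the 10 second sleep.

```python
import time

BLOCK = 32 * 1024
THRESHOLD = 512 * 1024 * 1024
DELAY_SECONDS = 0.2   # stands in for the 10 second Thread.Sleep in the server


class DelayingServer:
    """Hypothetical file server that stalls reads at or beyond THRESHOLD."""

    def __init__(self):
        self.requests = []

    def read(self, offset, length):
        self.requests.append(offset)
        if offset >= THRESHOLD:
            time.sleep(DELAY_SECONDS)
        return bytes(length)   # the returned data can be arbitrary


class CachedMapping:
    """Hypothetical mapping that fetches each 32KiB block from the server once."""

    def __init__(self, server):
        self._server = server
        self._blocks = {}

    def read_bytes(self, offset, count):
        block_start = offset - (offset % BLOCK)
        if block_start not in self._blocks:   # the "page fault" path
            self._blocks[block_start] = self._server.read(block_start, BLOCK)
        start = offset - block_start
        return self._blocks[block_start][start:start + count]


def timed_read(mapping, offset):
    begin = time.monotonic()
    mapping.read_bytes(offset, 4)
    return time.monotonic() - begin


server = DelayingServer()
mapping = CachedMapping(server)
below_first = timed_read(mapping, THRESHOLD - 4)
below_second = timed_read(mapping, THRESHOLD - 4)
above_first = timed_read(mapping, THRESHOLD)
above_second = timed_read(mapping, THRESHOLD)
print(f"{below_first:.3f} {below_second:.3f} {above_first:.3f} {above_second:.3f}")
```

Only the first access past the threshold pays the delay, and the server sees exactly one request per block no matter how many times the block is read afterwards.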

A few things to note about the SMB client’s behavior which came out of testing. First the client or the Windows cache manager seem to be able to do some caching of the remote file. If you request a specific access when opening the file, such as GENERIC_READ | GENERIC_WRITE for the desired access then caching is enabled. This means the read requests do not go to the server if they’ve previously been cached locally. However if you specify MAXIMUM_ALLOWED for the desired access the caching doesn’t seem to take place. Secondly, sometimes parts of the file will be pre-cached, such as the first and last 32KiB of the file. I’ve not worked out what the cause is. Oddly it seems to happen more often with native code than .NET code, so perhaps it’s Windows Defender peeking at memory or perhaps Superfetch. In general as long as you keep your memory accesses somewhere in the middle of a large file you should be safe.

If you’ve run the example code you might notice a problem, running the example server locally fails with the following error:

System.Net.Sockets.SocketException (10013): An attempt was made to access a socket in a way forbidden by its access permissions.

By default Windows 10 has the SMB server enabled. This takes over the TCP ports and makes them exclusive so it’s not possible to bind to them from a normal user. It is possible to disable the local SMB server, but that would require administrator privileges. Still, it was worth verifying whether the SMB server approach will work even if we have to communicate with a remote server.

I did do some investigation into tricks I could use to get the built-in SMB server to work for our purposes. For example I tried to use the fact that you can set an Opportunistic Lock which will trap file reads. I used this trick to exploit a TOCTOU vulnerability in the LUAFV driver. Unfortunately the SMB server detects the file is already in a lock and waits for the OpLock break to occur before allowing access to the file. This made it a non-starter.

For testing you can disable the LanmanServer service and its corresponding drivers. If you wanted to use this on an arbitrary system you'd almost certainly need to connect to a remote server. I’ve released the example server code here, which can be repurposed, although it is only a demonstrator. It allows for read granularity of the native page size, which is assumed to be 4KiB. The server code should work on Linux but as of version 1.4.3 of SMBLibrary on NuGet there’s a bug which causes the server to fail when starting. There is a fix in the GitHub repository but at the time of writing there’s no updated package.

How well does abusing the SMB client meet with our criteria from earlier? I’ve crossed out all the ones we’ve met.

- Works on a default installation of Windows 10 20H2.

- Gives a clear signal when memory is read or written.

- Works when memory is accessed from both user and kernel mode.

- Allows for delaying memory access indefinitely.

- The data in the memory accessed is arbitrary.

- The primitive can be set up from a range of privilege levels.

- Can trap multiple times during the same exploit.

Using the SMB client does meet the majority of our criteria. I verified that it doesn’t matter whether kernel or user mode code accesses the memory it will still trap. The biggest problem is it’s hard to use this from a sandboxed application where it would perhaps be most useful. This is because MUP restricts access to remote file systems by default from restricted and low IL processes and AppContainer sandboxes need specific capabilities which are unlikely to be granted to the majority of applications. That’s not to say it’s completely impossible but it’d be hard to do.

While our trick doesn’t really delay the memory read indefinitely, for our purposes the limit of 60 seconds based on the SMB session timeout is going to be enough for most vulnerabilities. Also once the trap has been activated you can’t force the memory manager to request the same page from the server. I tried playing with memory caching flags and direct IO but at least for files over SMB nothing seemed to work. However, you can specify your own base address when mapping a file so you could map different offsets in the file to the same virtual address by unmapping the original and mapping in a new copy. This would allow you to use the same address multiple times.

WebDAV

As SMB can’t be easily used locally, what about WebDAV? By default TCP port 80 is unused on Windows 10 so we can start our own web server to communicate with. Also unlike on Linux there’s no requirement for having administrator privileges to bind to TCP ports under 1024. Even if either of these were not the case the WebDAV client supports a syntax to specify the TCP port of the server. For example if you use the path \\localhost@8080\share then the WebDAV HTTP connection will be made over port 8080.

However, does the WebDAV client expose the right read and write primitives to allow us to trap on memory access? I wrote a simple WebDAV server using the NWebDav library to serve local files. Running the script but specifying the WebDAV server on port 8080 to open the 1GiB file I’m immediately faced with a problem:

Get-NtFile : (0xC0000904) - The file size exceeds the limit allowed and cannot be saved.

Just opening the file fails with the error code STATUS_FILE_TOO_LARGE. The reason for that can be found in one of many Microsoft Knowledge Base articles such as this one. There’s a default limit of 50MB (that’s decimal megabytes) for any file accessed on a WebDAV share because it used to be possible to cause a denial of service by tricking a Windows system into downloading an arbitrarily large file.

The reason this size limit exists is also the reason WebDAV isn’t suitable for this attack. If you resize the file to below 50MB you’ll find the WebDAV client pulls the file in its entirety to the local disk before returning from the file open call. That file is then mapped into memory as a local file. The WebDAV server never receives a GET or PUT request synchronously with reads or writes to the memory mapping, so there’s no mechanism to detect or trap specific memory requests.

File System Overlay APIs

Abusing the SMB client does work, but it can’t be used locally on a default installation. I decided I needed to look for another approach. As I was looking at Windows Filter Drivers (see last blog post) I noticed a few of the drivers provided a mechanism to overlay another file system on top of an existing one. I trawled through MSDN to find the API documentation to see if anything would be suitable. The three I looked at are shown in the table below.

| File system | Supported Version | Default? |
| --- | --- | --- |
| Projected File System | Windows 10 1809 | No |
| Windows Overlay Filter (WOF) | Everything | Yes |
| Cloud Files API | Windows 10 1709 | Yes (except non-Desktop Server SKUs) |

By far the most interesting one is the Projected File System. This was developed by Microsoft to provide a virtual file system for Git. It allows placeholder files to be “projected” into a directory on disk and the contents of those files are only “rehydrated” to a full file on demand. In theory this sounds ideal: as long as it populates the file’s contents piecemeal we could add the delays when receiving the PRJ_GET_FILE_DATA_CB callback.

However a basic implementation based on Microsoft’s ProjectedFileSystem sample code would always rehydrate the entire file during file open, similar to WebDAV. Perhaps there’s an option I missed to stream the contents rather than populate it in one go but I couldn’t find it immediately. In any case the Projected File System is not installed by default making it less useful.

WOF doesn’t really allow you to implement your own file system semantics. Instead it allows you to overlay files from either a secondary Windows Image File (WIM) or compressed on the same volume. This really doesn’t give us the control we’re looking for, you might be able to finagle something to work but it seems a lot of effort.

That leaves us with the Cloud Files API. This is used by OneDrive to provide the local online filesystem but is documented and can be used to implement any file system overlay you like. It works very similarly to the Projected File System, with placeholders for files and the concept of hydrating the file on demand. The contents of the files do not need to come from any online service such as OneDrive, it can all be sourced locally. Crucially, in some basic testing it supported streaming the contents of the file based on what was being read, and you could delay the file data requests so that the reading thread would block until the read had been satisfied. This can be enabled by specifying the CF_HYDRATION_POLICY_PRIMARY hydration policy with the value CF_HYDRATION_POLICY_PARTIAL when configuring the base sync root. This allows the Cloud File API to only hydrate the parts of the file which were accessed.

This seemed perfect, until I tested with the PowerShell file mapping script where it didn’t work, my cloud file provider would always be requested to provide the entire file. Checking the Cloud Filter driver, when a request is received for mapping a placeholder file, the IRP_MJ_ACQUIRE_FOR_SECTION_SYNCHRONIZATION handler always fully rehydrates the file before completing. If the file is not hydrated fully then the call to NtCreateSection never returns which prevents the file being mapped into memory.

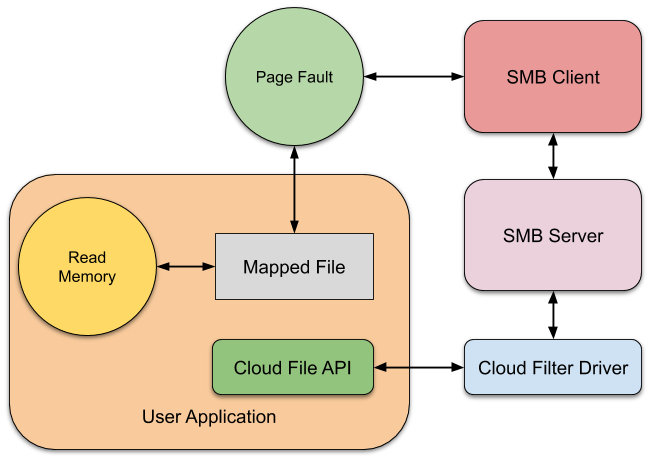

I was going to go back to doing my filter research until I realized I might be able to combine the SMB client loopback with the Cloud Filter API. I already knew that the SMB client doesn’t really map a file, even locally, instead it would read it on-demand via the SMB protocol. And I also knew that the Cloud Filter API would allow streaming of parts of the file on-demand as long as the file wasn’t being mapped into memory. The final setup is shown in the following diagram:

To use the primitive we first set up our own cloud provider by registering the sync root directory using the CfRegisterSyncRoot API, configuring it with the partial hydration policy. Then a 1GiB placeholder can be created in the directory using CfCreatePlaceholders. At this point the file does not have any contents on disk. If we now open and map the placeholder file via the SMB loopback client the file will not be rehydrated immediately.

Any memory access into the mapping will cause the SMB client to make a request for a 32KiB block, which will be passed to our user-mode cloud provider, which we can detect and delay as necessary. It goes without saying that the contents of the file can also be arbitrary. Based on testing it doesn’t seem like you can force the read granularity down to the native page size like when implementing a custom SMB server, however you can still make requests at native page size boundaries within the large page size constraint. It might be possible to modify the file size to trick the SMB server into doing short reads but this behavior has not been tested. A sample implementation of the cloud provider is available here.

Usage Examples

We now have an exploitation trick which allows us to trap and delay virtual memory reads and writes. The big question is, does this improve the exploitation of vulnerabilities such as double fetches? The answer depends on the actual vulnerability. A quick note: when I use the word page I mean the unit of memory which will cause a request to the SMB server, e.g. 32KiB, not the native page size such as 4KiB.

Let’s take the example given at the start of this blog post. This vulnerability reads the value from the same memory address, lpInputPtr, twice. First for the comparison, then for the size to copy. The problem for exploitation is that the memory trap is one-shot, which is one of the limitations of the technique. When the trap fires on the read of the size for the comparison you can delay it indefinitely. However, once you provide the requested memory page and the faulting thread is resumed it won’t fire on the second read, it’ll just be read from memory as if it was always there.

You might wonder if you could remap the memory page when you detect the first read? Unfortunately this doesn’t work. When the thread is resumed it restarts at the faulting instruction and will perform the read again, therefore what would happen is the following:

As you can tell from the diagram you end up trapped in an infinite loop, as you remap a fresh page which just triggers another page fault ad infinitum. If you don’t perform step ③ then the operation will complete and there is a time window between resuming the thread, reading the now valid memory for the size comparison and the second read. However, in this example the time window is likely to be on the order of a couple of instructions so using our exploitation trick isn’t better than the existing probabilistic approaches. That said one advantage is you do know when the read occurs which allows you to target the brute force window more accurately.
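The restart loop can be modelled in a few lines of Python. This is a toy state machine, not real fault handling: `faulting_read` is a hypothetical function, and `max_faults` exists only so that the otherwise endless loop terminates.

```python
def faulting_read(remap_on_fault, max_faults=5):
    """Model one read instruction that is restarted after every page fault."""
    page_resident = False
    faults = 0
    while True:
        if page_resident:
            return "read completed", faults
        if faults == max_faults:
            return "still faulting", faults   # would continue forever
        faults += 1
        page_resident = True                  # handler supplies the page
        if remap_on_fault:
            page_resident = False             # remap a fresh, unresolved page


print(faulting_read(remap_on_fault=False))
print(faulting_read(remap_on_fault=True))
```

Without the remap the read completes after a single fault. With the remap the restarted instruction never finds a resident page, so the first fetch never completes and the code never reaches the second one.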

This example is the worst case. What if there was more time between the reads? Another example from the Bochspwn paper is shown below:

    PDWORD BufferSize = // controlled user-mode address
    PUCHAR BufferPtr = // controlled user-mode address
    PUCHAR LocalBuffer;

    LocalBuffer = ExAllocatePool(PagedPool, *BufferSize); // ①
    if (LocalBuffer != NULL) {
        RtlCopyMemory(LocalBuffer, BufferPtr, *BufferSize); // ②
    } else {
        // bail out
    }

The same double fetch behavior is present, however what’s different is the value is passed to another function, in this case ExAllocatePool which allocates kernel memory. Depending on the current memory configuration or how large the requested allocation is, there might be a significant time delay between ① and ②. Is there any way we can win the race?

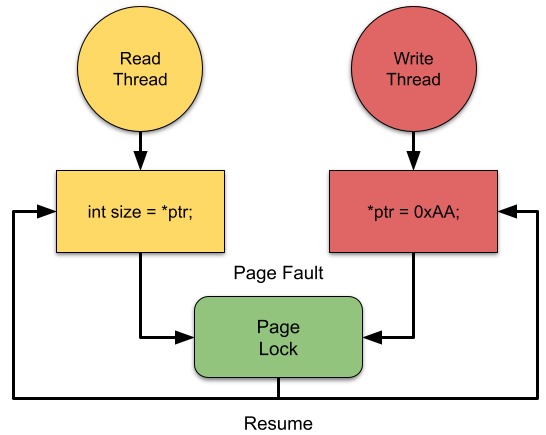

Well, not that I know of, at least not deterministically. But we can exploit one behavior to try to synchronize the reading and writing threads a little. Recall that in order to write to an unresolved page the contents of the page must first be read from the server. Therefore, to maintain consistency any thread which writes to the unresolved page must generate a page fault and wait on the same lock as another thread which is just reading from the page, as shown in the following diagram:

By synchronizing the reading and writing threads you’re giving yourself a reasonable chance of causing a write to happen during the time window for exploitation. This is still a probabilistic approach as it depends on the scheduler. For example, it’s possible that the write thread is woken before the read thread, which will cause both reads to see the final value. Or the read thread could run to completion before the write thread is ever scheduled to run, so the value never changes. It’s possible there’s some scheduler magic you could exploit to guarantee read and write ordering, such as using multiple reader or writer threads or selecting appropriate priorities. I’d be surprised if anything is reliable across multiple Windows 10 systems. I’d be very interested to hear from anyone who’s got better ideas on how to improve the reliability of this.
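The three scheduling outcomes can be written down as a small model of the ExAllocatePool example. This is a sketch only: the `write_slot` enumeration and both helpers are hypothetical, and the model just picks where the writer lands rather than running real threads.

```c
#include <assert.h>
#include <stdint.h>

/* Where the writer thread's store lands relative to the two fetches. */
typedef enum {
    WRITE_BEFORE_READS,   /* writer woken first */
    WRITE_BETWEEN_READS,  /* the ordering we are hoping for */
    WRITE_AFTER_READS     /* reader ran to completion first */
} write_slot;

typedef struct {
    uint32_t first;   /* value seen by fetch 1 (allocation size) */
    uint32_t second;  /* value seen by fetch 2 (copy size) */
} fetch_pair;

static fetch_pair run_schedule(write_slot slot, uint32_t initial,
                               uint32_t final_value)
{
    uint32_t shared = initial;
    fetch_pair seen;

    assert(final_value > initial);  /* the writer grows the size */
    if (slot == WRITE_BEFORE_READS)
        shared = final_value;
    seen.first = shared;
    if (slot == WRITE_BETWEEN_READS)
        shared = final_value;
    seen.second = shared;
    /* WRITE_AFTER_READS: the store lands too late to be observed. */
    return seen;
}

/* The copy overflows only if it uses a larger size than was allocated. */
static int overflows_allocation(fetch_pair seen)
{
    return seen.second > seen.first;
}
```

Only `WRITE_BETWEEN_READS` produces an overflow. Synchronizing the threads on the page fault improves the odds of that ordering but the scheduler still gets the final say.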

One approach you might be wondering about is unaligned access, say splitting the value across two separate pages. From a microarchitecture perspective it’s likely that the read will be split up into two parts, first touching one page then another. However, remember how the page fault works: it generates an exception which causes a handler to execute in the kernel. At this point any work the instruction has already done will have been discarded while the kernel deals with the page fault. When the thread is resumed it will restart the faulting instruction, which will reissue the appropriate micro operations to read from the unaligned address. Unless the compiler generated two loads for the unaligned access (which might happen on some architectures) there is no way I know of to restart the memory access instruction part of the way through.

This all seems slightly downbeat on the usefulness of the exploitation trick. Thing is, there are as many different types of vulnerability as there are fish in the sea (if you’re reading this in 2100, I apologize for the acidification of the seas which killed all marine life, choose your own apocalypse-appropriate proverb instead). For example, if we modify the original example as follows:

    PDWORD lpInputPtr = // controlled user-mode address
    UCHAR LocalBuffer[256];

    if (lpInputPtr[0] > sizeof(LocalBuffer) ||
        lpInputPtr[1] != 2) {
        return STATUS_INVALID_PARAMETER;
    }
    RtlCopyMemory(LocalBuffer, lpInputPtr, *lpInputPtr);

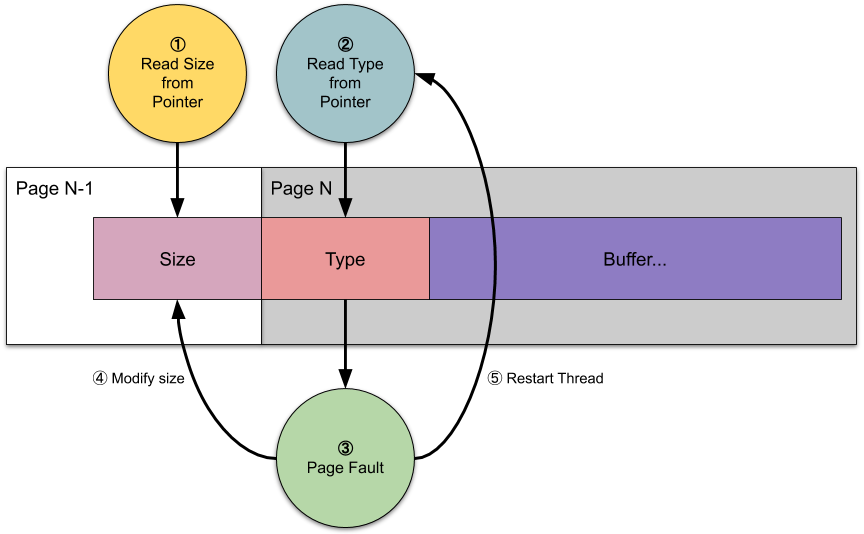

The check now ensures that the size fits within LocalBuffer and that a second DWORD in the buffer is set to 2. The second field might represent the buffer type, where only type 2 is valid for this request. If you check the compiler output for this code, such as on Godbolt, the difference in native code is 2 or 3 instructions. This would seem to not materially improve the odds of winning the TOCTOU race when using a naïve probabilistic approach. But with our exploitation trick we can now build a deterministic exploit.

The diagram above shows how we can achieve this deterministic exploit. We can place the Size field on a different page to the rest of the input buffer, although the buffer is still contiguous in virtual memory. The first page (N-1) should already be faulted into memory and contain the Size field which is smaller than the LocalBuffer size. We can let the read for the size ① complete normally.

Next the code will read the Type field which is on page N ②. This page isn’t currently in memory and so when it’s accessed a page fault will occur ③. This requires the kernel to read the contents from the file, which we can detect and delay. When the read is detected we have as long as we need to modify the Size field to contain a value larger than the LocalBuffer size ④. Finally we complete the read, which will restart the thread back at the Type field read instruction ⑤. The code can continue and will now read the overly large Size field and cause memory corruption.
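The sequence can be modeled in portable C to check the logic. This is a sketch and not real kernel code: `input_model`, its accessors and `swap_size_during_trap` are hypothetical, the callback stands in for our file server, and the model returns the copy length rather than performing the copy.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define LOCAL_BUFFER_SIZE 256u

/* Hypothetical model of the two-page input buffer. */
typedef struct input_model {
    uint32_t size;        /* lpInputPtr[0], on resident page N-1 */
    uint32_t type;        /* lpInputPtr[1], on unresolved page N */
    int page_n_resident;
    void (*on_page_n_fault)(struct input_model *in); /* our file server */
} input_model;

static uint32_t read_size(input_model *in)
{
    return in->size;      /* page N-1 is resident: no trap */
}

static uint32_t read_type(input_model *in)
{
    if (!in->page_n_resident) {        /* page fault, request reaches us */
        if (in->on_page_n_fault != NULL)
            in->on_page_n_fault(in);   /* thread is stalled while this runs */
        in->page_n_resident = 1;       /* read completes, instruction restarts */
    }
    return in->type;
}

/* Mirrors the checks in the modified example. Returns the length which
 * would be copied into LocalBuffer, or 0 if the request is rejected. */
static size_t vulnerable_copy_length(input_model *in)
{
    if (read_size(in) > LOCAL_BUFFER_SIZE ||  /* first fetch of the size */
        read_type(in) != 2) {                 /* touches page N */
        return 0;
    }
    return read_size(in);                     /* second fetch of the size */
}

/* Runs while the faulting thread is blocked on the Type read. */
static void swap_size_during_trap(input_model *in)
{
    in->type = 2;    /* the contents we supply for page N */
    in->size = 400;  /* now larger than LocalBuffer */
}
```

Starting from a size of 100 with `swap_size_during_trap` installed, `vulnerable_copy_length` returns 400 every time. There's no timing involved in the model at all, which is the point.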

The key takeaway is that if between the double fetch points the code touches any user mode memory under your control which is not the one being double fetched it should be possible to convert that into a deterministic exploit. It doesn’t matter if the target system only has a single CPU, what the scheduling algorithm is in the kernel, how many instructions are between the double fetch points or what day of the week it is etc, it should “just work”.

The followup blog post on double-fetch exploitation gives some figures for exploitability. For the examples shown up to now, when the right timing window is chosen the chance of success can hit 100% after some number of seconds. As shown here we can also get 100% reliability on some classes of the same bug, so in the best case this isn’t an improvement other than being deterministic.

All examples up to now only demonstrate the exploitation of what the blog post refers to as arithmetic races. The blog also mentions a second class of bug, binary races, which are harder to exploit and never reach 100% success. Let’s look at the example in the blog and see if our exploitation trick would do better.

    PVOID* UserPointer = // controlled user-mode address
    __try {
        ProbeForWrite(*UserPointer, sizeof(STRUCTURE), 1); // ①
        RtlCopyMemory(*UserPointer, LocalPointer, sizeof(STRUCTURE)); // ②
    } __except {
        return GetExceptionCode();
    }

On the face of it this doesn’t look massively different to previous examples, however in this case the destination pointer is being changed rather than the size. The ProbeForWrite kernel API checks both that the pointer is at a user-mode address and that the memory is writable. This is a commonly used idiom to verify a user supplied pointer is not pointing into kernel memory.

If the pointer value is changed between ① and ② from a user mode address to a kernel mode address the example would overwrite kernel memory. The behavior is harder to exploit with a probabilistic exploit as there are only two valid values of the pointer, either a user-mode address or a kernel mode address. If you’re brute forcing the pointer value then it’s possible to end up where both fetches read a user-mode pointer even though it might change to a kernel pointer in between the fetches.
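A small model shows why the brute force approach can miss. It assumes, purely for illustration, a flipping thread which toggles the pointer between the two values starting from the user-mode one; `value_after_flips` and `binary_race_won` are hypothetical helpers.

```c
#include <assert.h>

typedef enum { USER_PTR, KERNEL_PTR } ptr_kind;

/* The flipper starts at the user-mode value and toggles on every flip. */
static ptr_kind value_after_flips(unsigned flips)
{
    return (flips % 2) ? KERNEL_PTR : USER_PTR;
}

/* The race is only won if the probe sees a user-mode pointer and the
 * copy then sees a kernel-mode one. */
static int binary_race_won(unsigned flips_before_probe, unsigned flips_between)
{
    ptr_kind probed = value_after_flips(flips_before_probe);
    ptr_kind copied = value_after_flips(flips_before_probe + flips_between);
    return probed == USER_PTR && copied == KERNEL_PTR;
}
```

One flip between the fetches wins, but two flips lose: the pointer did become a kernel address in between, yet both fetches still read the user-mode value.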

Fortunately, due to the call to ProbeForWrite this is trivial to exploit if you can trap on user memory access as shown in the following diagram:

From the diagram the first read from UserPointer is made ① and the resulting pointer value passed to ProbeForWrite. The ProbeForWrite API first checks if the pointer is in the user-mode address space, then probes each page of memory up to the size of the length parameter ②. If the page is invalid or is not writable then an exception will be generated and caught by the example's __except block. This gives us our exploit opportunity: we can use the exploitation trick on one of the user-mode pages being probed, which will cause ProbeForWrite to generate a page fault we can trap ③. As the address being probed is not the same as the one storing the pointer, we can modify the stored pointer to contain a kernel mode address while the request is trapped ④. The result is we can deterministically win the race.
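The same flow can be modeled in portable C. Everything here is a stand-in: `model_probe_for_write` only imitates the two checks described above, `MODEL_USER_LIMIT` and the kernel-range constant are arbitrary illustrative values, and the callback represents our file server holding the read request.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical address-space split, for illustration only. */
#define MODEL_USER_LIMIT 0x7FFFFFFF0000ull

typedef struct probe_model {
    uint64_t user_pointer;  /* the value stored at *UserPointer */
    int target_resident;    /* is the probed user page resolved yet? */
    void (*on_probe_fault)(struct probe_model *m); /* our file server */
} probe_model;

/* Stand-in for ProbeForWrite: range check, then touch the page.
 * Returns 0 where the real API would raise an exception. */
static int model_probe_for_write(probe_model *m, uint64_t address)
{
    if (address >= MODEL_USER_LIMIT)
        return 0;
    if (!m->target_resident) {      /* probing the page faults */
        if (m->on_probe_fault != NULL)
            m->on_probe_fault(m);   /* runs while the thread is stalled */
        m->target_resident = 1;
    }
    return 1;
}

/* Returns the address the copy would write to, or 0 if the probe failed. */
static uint64_t model_vulnerable_write(probe_model *m)
{
    if (!model_probe_for_write(m, m->user_pointer))  /* first fetch */
        return 0;
    return m->user_pointer;                          /* second fetch */
}

/* Swap the stored pointer while the probe is blocked. */
static void swap_to_kernel_pointer(probe_model *m)
{
    m->user_pointer = 0xFFFFF80000001000ull;  /* arbitrary kernel-range value */
}
```

A kernel-range pointer supplied up front is rejected by the probe, while a user-mode pointer to an unresolved page passes the probe and is swapped before the second fetch, so the model always ends up writing to the kernel-range address.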

Of course I’ve been focussing on kernel double fetches as it’s what originally drew me to look for this behavior. There are many scenarios where this can be used to aid exploitation of user-mode applications. The most obvious one is where a service is sharing memory with a lower privileged application. An example of this sort of issue was a double-fetch in the DfMarshal COM marshaler. The COM marshaler shared a memory section between processes so it was possible to provide a section which exploited our trick. In the end this trick wasn’t necessary as the logic of the vulnerable code allowed me to create an infinite loop to extend the double fetch window. However if that didn't exist we could use this trick to detect and delay when the code was at the point where the handle could be switched.

Another more subtle use is where a privileged process reads memory from a less privileged process. This might be explicit use of APIs such as ReadProcessMemory or it could be indirect, for example querying for the process’ command line using NtQueryInformationProcess will read out memory locations under our control.

The thing to remember with this exploitation trick is it can be used to open up the window to win a timing race. In this case it’s similar to my previous work on oplocks, but instead for memory access. In fact the access to memory might be incidental to the vulnerable code, it doesn’t have to be a memory double fetch or necessarily even a TOCTOU vulnerability. For example you might be trying to win a race between two file paths with symbolic links. As long as the vulnerable code can be made to probe a user mode address we control then you can use it as a timing signal and to widen the exploitation window.

Conclusions

I’ve described an exploitation trick combining SMB and the Cloud File API which can aid in demonstrating exploitation of certain types of application and kernel vulnerabilities. It’s possible that there are other ways of achieving a similar result with APIs I haven’t looked at, but for now this is the best approach I’ve come up with. It allows you to trap on reads from user-mode memory, detect when the access occurs and delay the read for at least 60 seconds. Examples of code to implement the SMB and Cloud File API tricks are available here.

It’s worth just reiterating some more of the limitations of this exploitation trick before we conclude.

- Can’t be used in a sandbox, only from a normal user privilege.

- Only allows a one shot for any page mapped from the file. If something else (such as AV) tries to read that page or from the file then the trap may fire early.

- Can’t detect the exact location of a read, limited to a granularity of 4KiB. For local access via the Cloud File API the 32KiB read will always populate the next 7 pages as well. If accessing a custom SMB server the read size can be reduced to 4KiB. This would prevent exploitation of certain bugs which require precise trapping only on a small area within a larger structure.

- Can only detect writes indirectly, can’t specifically trap on a write.
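The granularity limitation is just address arithmetic. The sketch below assumes the read starts at the faulting native page and runs forward for the full read size, which is how I read the "next 7 pages" behavior; it doesn't model clipping at the end of the file, and `populated_range` and `trap_still_armed` are hypothetical helpers.

```c
#include <assert.h>
#include <stdint.h>

#define NATIVE_PAGE 0x1000ull  /* 4KiB */
#define CLOUD_READ  0x8000ull  /* 32KiB request seen via the Cloud File API */

typedef struct {
    uint64_t start;  /* first file offset populated */
    uint64_t end;    /* one past the last file offset populated */
} read_range;

/* Which part of the file a single trapped access ends up populating. */
static read_range populated_range(uint64_t fault_offset, uint64_t read_size)
{
    read_range r;
    assert(read_size >= NATIVE_PAGE && read_size % NATIVE_PAGE == 0);
    r.start = fault_offset & ~(NATIVE_PAGE - 1);
    r.end = r.start + read_size;
    return r;
}

/* After that one read, would an access at this offset still trap? */
static int trap_still_armed(read_range already_read, uint64_t offset)
{
    return offset < already_read.start || offset >= already_read.end;
}
```

For a fault at offset 0x5123 the Cloud File API case populates 0x5000 up to 0xD000, so a second field anywhere in that range can no longer be trapped. With a custom SMB server and a 4KiB read only 0x5000 up to 0x6000 is consumed.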

From a practical perspective the trick presented here doesn’t significantly improve the win rates for traditional kernel double fetches outlined in the Bochspwn paper. Realistically for most of those classes of vulnerability you’d probably want to use a probabilistic approach, if anything due to its simplicity of implementation. However the trick is applicable to other bug classes where the memory trap is used as a deterministic timing signal adjunct to the vulnerability.

The one shot nature of the trick also makes it of no real benefit to exploiting simple double fetch code paths. Also, more complex code might read and write to a memory address more than once before you get to the vulnerable code, which would make managing traps more difficult.
